L'analyse du transcriptome consiste à mesurer simultanément l'expression de tous les gènes d'un génome. Elle est utilisable dans tous les problèmes où l'on suivait classiquement l'expression de quelques gènes (comparaison de plusieurs génotypes ou de plusieurs traitements, cinétique, etc.).

![]() Bibliographie

sur des exemples de variation d'expression

Bibliographie

sur des exemples de variation d'expression

La technologie qui prédomine est basée sur les puces à ADN. Une puce à ADN est constituée d'un support physique (le plus souvent une lame de verre) sur lequel sont déposées des molécules d'ADN correspondant à de petits fragments du génome (jusqu'à 40 000 dépôts différents par puce en 2007). On recouvre la puce de la solution contenant la population d'ARN à étudier. Les ARN s'hybrident sur les fragments d'ADN complémentaires. La quantité d'ARN fixée reflète la concentration de cet ARN dans la solution. Il peut exister des biais systématiques dus à d'autres facteurs, tels que l'affinité des séquences ou l'efficacité du marquage.

Pour des raisons pratiques, on utilise des ADNc plutôt que directement les ARN. Les ADNc sont marqués par un nucléotide radioactif ou un fluorochrome. Il est possible d'étudier simultanément plusieurs populations d'ADNc sur une même puce en utilisant des fluorochromes différents. La meilleure façon d'utiliser cette possibilité est de marquer de l'ADN génomique avec un fluorochrome, toujours le même. On obtient ainsi une référence stable au cours des années qui permet de mettre toutes les puces à la même échelle, quelle que soit leur origine.

Un scanner mesure l'intensité du signal émis par l'ADNc hybridé au niveau de chaque dépôt. Parmi les valeurs que proposent les logiciels pour cette intensité, la plus fiable est la médiane de l'intensité des pixels car elle est moins sensible aux défauts de l'image (pixels sur-brillants par exemple).

Les puces comportent généralement plusieurs dépôts identiques pour chaque gène. Cela simplifie le travail lorsqu'il faut repérer les aberrations dans la lecture des intensités puisqu'il suffit d'examiner les cas où les valeurs diffèrent beaucoup d'un dépôt à l'autre. Il s'agit le plus souvent d'un défaut physique sur la puce et il est facile d'éliminer la valeur aberrante. Dans le doute, on conserve la médiane des différentes mesures.

![]() Pour

en savoir plus sur les puces

Pour

en savoir plus sur les puces ![]() Bibliographie

sur les puces

Bibliographie

sur les puces

On appellera :

• expérience l'ensemble du tableau de chiffres à analyser,

• facteur un paramètre de l'expérience (un facteur de croissance, le jour de l'expérience, etc.),

• état du facteur une des valeurs qu'il peut prendre (présence ou absence du facteur de croissance, jour A, B ou C, etc.),

• condition expérimentale une combinaison particulière des états des facteurs. Une condition expérimentale correspond à une colonne du tableau de chiffres à analyser.

Les lignes du tableau correspondent aux gènes (ou à des objets apparentés tels que les EST). Une case du tableau contient une valeur qui représente, peu ou prou, le niveau d'expression d'un gène donné dans une condition expérimentale donnée.

Note : Tout ce qui est dit ici sur l'exploitation des expériences de transcriptome peut être appliqué, mutatis mutandis, aux expériences sur le protéome et le métabolome.

L'analyse du transcriptome apporte des informations statistiques qui ne prennent un sens qu'au bout d'un nombre suffisant de répétitions.

Dans une expérience de transcriptome, il est courant de constater qu'entre deux conditions expérimentales, le niveau d'expression varie notablement pour au moins 10 % des gènes. La liste est trop longue pour être exploitable concrètement. Il est nécessaire d'en extraire les gènes pertinents. Le travail est grandement facilité par une organisation adéquate des expériences. Seul un plan d'expérience bien pensé peut restreindre efficacement la liste des gènes candidats.

Cette situation permet de tirer le maximum d'informations de l'expérience pour un travail minimal. C'est un idéal dont il faut s'approcher autant que faire se peut.

Une expérience comprend trois types de facteurs, chacun apportant une information spécifique.

Le premier facteur correspond au phénomène étudié. L'étude peut porter sur deux états ou plus (deux conditions de culture par exemple ou plusieurs prélèvements au cours d'une cinétique). La situation est idéale lorsque le passage d'un état à l'autre modifie le niveau d'expression de très peu de gènes. Ainsi, par construction, la liste des gènes candidats sera courte. Typiquement, il y a plus à apprendre de la comparaison de deux maladies apparentées que de la comparaison d'un malade et d'une personne en bonne santé. En effet, les modifications du métabolisme ne sont pas toutes caractéristiques de la maladie. Il suffit pour s'en convaincre de penser à la fièvre: c'est une réaction associée à de nombreuses maladies. Malgré tout, même en prenant des précautions, il est impossible d'éviter que les gènes qui ont une relation indirecte avec le phénomène étudié polluent la liste (les gènes impliqués dans la synthèse de métabolites précurseurs, par exemple).

Le deuxième type de facteur a pour objectif de vérifier que les observations restent vraies lorsque les paramètres biologiques varient. Retrouve-t-on les mêmes changements de niveau d'expression quand l'expérience est reproduite un autre jour ? Les gènes se comportent-ils de la même façon dans différentes lignées ? . Les gènes candidats les plus intéressants sont ceux qui répondent de la même dans tous les cas (leur comportement est reproductible). Ils sont probablement au cour du phénomène étudié puisque leur comportement n'est pas limité à un contexte particulier (génétique, physiologique.). A minima, le deuxième facteur correspond à la variabilité biologique introduite par la répétition de l'expérience au cours du temps. Il est en effet quasi-impossible de réobtenir strictement les mêmes conditions physiologiques. Mais le plan d'expérience est plus efficace quand le deuxième type de facteurs est décomposé : dates, lignées, etc.

Le troisième type de facteurs correspond aux aspects techniques (protocole de marquage des ADNc, dépôt sur la puce à ADN, etc.). Ce type de facteur peut entraîner un alourdissement des expériences (dye swap) sans apporter une information biologiquement pertinente. Les artefacts techniques ne sont pas graves en soi puisque l'analyse porte sur les changements de niveau et qu'elle n'est que semi-quantitative. Les protocoles sont devenus très reproductibles et il suffit de s'en tenir à un, tout en ayant conscience qu'il présente des biais systématiques.

![]() Pour en savoir plus sur le Dye Swap

Pour en savoir plus sur le Dye Swap

![]() Bibliographie

sur la comparaison des différents protocoles

Bibliographie

sur la comparaison des différents protocoles

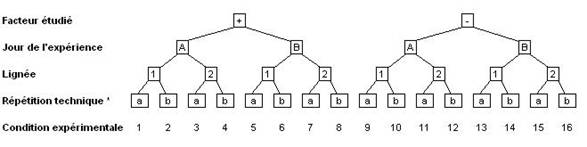

Pour chaque facteur, il existe deux états ou plus. L'expérience est complète si toutes les combinaisons d'états ont été effectivement mesurées. C'est à dire que le tableau des résultats complet contiendra N = n1× ... × nk× ... × nF colonnes si F est le nombre de facteur et nk le nombre de conditions pour le facteur k. La configuration typique d'une expérience complète est représentée Figure 1. Dans cet exemple, elle comporte seize colonnes.

Il est important de noter qu'une répétition biologique implique que toute la procédure expérimentale est refaite en entier à chaque fois. Dans l'exemple de la Figure 1, ceci veut dire que les deux conditions de milieu et les deux lignées ont été testées le jour A et le jour B et qu'il n'y a qu'un seul expérimentateur. Faire deux fois la lignée 1 le jour A et deux fois la lignée 2 le jour B est un non-sens car il ne sera pas possible de séparer l'effet du jour et l'effet de la lignée.

Deux échantillons sont homogènes lorsque le nombre d'individus dans chaque échantillon est suffisant pour gommer les variabilités individuelles. De la sorte, les conclusions sont valables pour l'ensemble de la population. L'échantillon est représentatif. Lorsque des raisons techniques conduisent à préparer les extraits individu par individu, on peut faire la moyenne des mesures individuelles ou mélanger les extraits avant de procéder au marquage. Les deux façons de faire donnent le même résultat. La seconde est plus économique

Chercher les gènes dont l'expression dépend de deux facteurs à la fois est une question trop vague pour qu'on puisse avoir une réponse satisfaisante. En effet, la formulation la plus générale d'une interaction revient à dire que la combinaison des états de deux facteurs donne des résultats imprévisibles. Cette définition recouvre le cas où les observations ne sont pas reproductibles !

Une étude de ce type est inéluctablement lourde. En plus des deux facteurs étudiés, il faut introduire au minimum un troisième facteur (répétition biologique.) pour être sûr que les observations sont reproductibles et biologiquement significatives.

C'est typiquement le cas des études cliniques car elles dépendent beaucoup du hasard des recrutements à l'hôpital. L'objectif du chercheur est de s'approcher autant que possible du plan d'expérience décrit ci-dessus.

Dans le cas le plus simple, les malades peuvent être regroupés de façon à former des catégories homogènes. On retombe alors dans le cas précédent en utilisant la moyenne des mesures individuelles pour chaque condition expérimentale. Les essais multicentriques remplacent la répétition biologique. Ils permettent d'éliminer les gènes dont l'expression serait propre à un fond génétique ou socioéconomique particulier.

Le problème est radicalement différent lorsque l'étude a pour objectif de découvrir des sous-types de la maladie. Dans ce cas, les malades ne peuvent pas être regroupés dans des catégories homogènes définies a priori . L'analyse statistique va chercher tout à la fois à regrouper les malades en un petit nombre de catégories et à lister les gènes dont l'expression est caractéristique de chaque catégorie. Le problème est qu'une telle analyse donne toujours un résultat. Elle aboutit inéluctablement à une liste de gènes potentiellement caractéristiques de plusieurs catégories de malades. L'étape clé va être la validation sur d'autres personnes afin de s'assurer de la fiabilité de la liste et de la réalité des catégories. La validation nécessite des effectifs importants. Il est prudent de compter cinq à dix fois plus de personnes par catégories de malades que de gènes candidats.

Il est aisé d'obtenir des gènes « diagnostiques » qui donnent d'excellents résultats au cours de la validation si les échantillons sont trop petits. Mais dans ce cas la fiabilité des résultats est illusoire et la recherche qui en découle risque fort d'être orientée sur de fausses pistes.

L'analyse du transcriptome apporte une information partielle. Elle donne une image des changements de niveau d'expression entre deux états. Mais la relation causale entre le changement d'état et le changement de niveau d'expression est indirecte pour la plupart des gènes. En fait, les changements d'état physiologique sont souvent déclenchés par l'expression transitoire d'un gène ou de quelques gènes. C'est un instant qui a peu de chance d'être saisi au cours d'une analyse de transcriptome. Identifier le ou les gènes responsables du phénomène étudié nécessite des informations complémentaires.

Une solution est d'étudier la cinétique du phénomène en mesurant l'expression des gènes à des différents temps à condition de multiplier les points de mesure pour déceler les évènements transitoires. Cette approche conduit à des expériences très lourdes car il est indispensable de répéter la cinétique plusieurs fois pour être sûr que les changements de niveau d'expression ont une réalité biologique.

Les informations provenant des séquences (motifs fonctionnels, ontologies.) et des bases de données métaboliques sont aussi très utiles. Il faut que la fonction probable des gènes au vu de leur séquence soit compatible avec le rôle suggéré par l'analyse du transcriptome.

![]() Bibliographie

sur le recoupement des sources d'informations

Bibliographie

sur le recoupement des sources d'informations

Que le marquage soit radioactif ou fluorescent, la lecture des puces à ADN fait appel à des techniques bien connues. Il n'empêche que c'est une importante source d'erreur car la dynamique du signal est élevée, l'intensité du marquage difficile à contrôler et les signaux faibles en partie cachés par le bruit de fond.

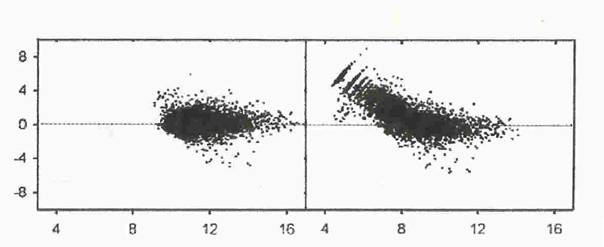

Il est fréquent que l'étendue des intensités déborde le domaine où le système de lecture a une réponse linéaire. Dans ce cas, la mesure est faussée pour les valeurs les plus faibles ou les plus fortes. Ceci conduit à des aberrations caractéristiques sur les graphiques où l'on compare les mesures faites dans deux conditions différentes (Figure 2) : le nuage des gènes est courbé et peut même présenter des stries pour les faibles intensités. En revanche, le nuage forme un cigare droit et homogène lorsque l'acquisition du signal est correcte (voir ci-dessous le chapitre Méthodes graphiques exploratoires pour une présentation détaillée du nuage des gènes).

Les erreurs de lecture peuvent être plus ou moins bien corrigées par un traitement statistique. De nombreuses méthodes permettent notamment de redresser le nuage. Ce n'est qu'un pis-aller qui ne remplace jamais une mesure correcte. La bonne solution est de prévoir sur la puce à ADN des dépôts correspondant à une gamme de concentration pour régler le système de lecture avant de procéder aux mesures. Il faut recourir à deux lectures avec des réglages différents dans le cas où la dynamique du signal excède le domaine de linéarité du système de lecture.

![]() Bibliographie

sur l'acquisition du signal

Bibliographie

sur l'acquisition du signal

Le support de la puce à ADN émet un bruit de fond dû en partie à une hybridation non spécifique. Il semble logique de supprimer ce bruit. Le problème est complexe et les options proposées par les logiciels de traitement d'image souvent simplistes. Il n'est pas rare qu'elles dégradent la qualité des mesures au lieu de l'améliorer. La correction est efficace lorsque la différence entre les deux dépôts d'un même gène est plus faible en moyenne après la correction.

En réalité le bruit de fond affecte peu l'analyse des résultats puisque celle-ci revient à examiner la position des gènes les uns par rapport aux autres dans le nuage. Sous l'effet du bruit de fond, les gènes bougent un peu, mais pas au point de bouleverser le nuage. L'effet n'est sensible que pour les plus bas niveaux d'expression, dans un cas où de toute façon les mesures sont très imprécises.

Les sources de variabilités incontrôlées sont nombreuses et une préparation d'ARNm a peu de chance de donner les mêmes résultats si elle est testée sur deux puces à ADN : il n'y a pas de raisons pour que le marquage soit exactement le même d'une fois sur l'autre ou que le système de lecture soit réglé exactement de la même façon.

Ce sont des biais qui affectent tous les gènes de la même façon et le problème est résolu en donnant la même moyenne et la même variance à toutes les conditions expérimentales (c'est-à-dire toutes les colonnes dans le tableau de résultats). D'un point de vue statistique, cette opération revient à leur donner le même poids dans les analyses ultérieures. D'un point de vue biologique, c'est faire l'hypothèse que la quantité totale d'ARNm dans les cellules est constante dans l'expérience. L'hypothèse est solide pour une puce génomique car l'expression de la plupart des gènes ne change pas dans une expérience. Elle est à vérifier au cas par cas avec les puces spécialisées contenant peu de gènes. Certains auteurs préfèrent utiliser une estimation robuste de la moyenne et de la variance basée sur les quartiles (Encart 1). Cette façon de faire est moins sensible aux valeurs extrêmes (anormalement basses ou anormalement élevées), et parfois mieux adaptéeà la distribution des valeurs d'expression.

La distribution du niveau d'expression des gènes est très asymétrique avec un petit nombre de valeurs élevées. C'est une source de problèmes car de nombreuses méthodes statistiques supposent implicitement une distribution gaussienne. L'asymétrie est fortement diminuée si les données brutes sont remplacées par leur logarithme ou par leur racine cinquième. La transformation logarithmique est la plus utilisée. Après transformation, les méthodes statistiques peuvent être utilisées en toute confiance.

La transformation logarithmique présente l'inconvénient d'amplifier les écarts des petites valeurs : après le passage au logarithme, 0,1 et 1 deviennent aussi éloignés que 100 et 1000. Le nuage des gènes s'évase considérablement pour prendre une forme en trompette (Figure 3). Cette figure très fréquente dans les publications est un pur artefact dû à la transformation logarithmique de valeurs proches de zéro. Nota bene, les valeurs proches de zéro résultent habituellement de la soustraction du bruit de fond.

Des traitements mathématiques permettent de limiter l'ampleur de cette déformation. Le problème peut être évité en ajoutant une constante de sorte que la valeur la plus faible soit aux environs de la centaine.

Bibliographie sur la

normalisation ![]()

Les dépôts correspondant à chaque gène ne sont pas toujours reproductibles d'une puce à l'autre (à cause, par exemple, d'une concentration variable de la solution d'ADN qui est déposée). Il est possible de corriger ce biais et de mettre toutes les puces à la même échelle à condition de les hybrider toutes avec la même référence.

La meilleure référence est l'ADN génomique marqué toujours avec le même fluorochrome car elle est parfaitement stable dans le temps. L'utilisation de l'ADN génomique allège considérablement les plans d'expérience en supprimant notamment le dye swap et permet de mettre à la même échelle des puces qui ont été fabriquées à des années d'écart.

L'expérience montre que la PCR quantitative donne les mêmes résultats que les puces à ADN lorsqu'on utilise les mêmes sondes. Une expérience réalisée avec des PCR quantitatives est donc l'équivalent d'une analyse de transcriptome menée avec une puce à ADN comportant peu de gènes.

Par conséquent, toutes les méthodes d'analyse développées pour les puces à ADN s'appliquent à la PCR quantitative, et en particulier la nécessité d'une transformation logarithmique (pour rendre les distributions gaussiennes) suivie d'une transformation linéaire pour amener la moyenne à zéro et la variance à un pour chaque condition expérimentale. Dans le cas de la PCR quantitative, ceci revient à appliquer la transformation linéaire directement sur les Delta Ct (Ct = nombre de cycles d'amplification à partir duquel la fluorescence est détectée ; Delta Ct = Ct gène étudié - Ct gène référence).

![]() Bibliographie

sur la PCR quantitative

Bibliographie

sur la PCR quantitative

Les valeurs manquantes posent un problème car la plupart des analyses nécessitent un tableau de chiffres complet. Les valeurs manquantes ont deux origines : (i) un défaut rend la mesure impossible pour un gène sur une puce à ADN ; (ii) la mesure est éliminée car elle n'est pas notablement supérieure au bruit de fond.

Eliminer un gène lorsqu'il lui manque une valeur dans une colonne n'est pas une solution car le problème va se poser de nouveau pour d'autres gènes dans d'autres colonnes si le nombre de conditions expérimentales augmente. On considère généralement qu'il vaut mieux remplacer la valeur manquante par une valeur estimée à partir des mesures faites dans d'autres conditions expérimentales.

En revanche, supprimer une valeur bruitée, mais réelle, pour la remplacer par une valeur estimée est un contre-sens. On perd l'information qui était plus ou moins noyée dans le bruit de fond sans rien gagner en contre-partie. C'est pourtant ce que l'on fait lorsqu'on élimine les valeurs voisines du bruit de fond.

Plusieurs techniques, dont la régression linéaire, permettent de calculer les valeurs estimées. Elles n'ont pas toutes la même précision. On peut estimer empiriquement la qualité d'une méthode en supprimant quelques valeurs dans le tableau de départ puis en comparant les valeurs prédites aux valeurs initiales.

Bibliographie sur le traitement

des valeurs manquantes ![]()

On fait appel aux statistiques pour répondre à la question : les différences d'expression observées sont-elles bien réelles ? La réponse est indirecte, les statistiques donnent la probabilité pour qu'on ait affaire à un faux-positif (la p-value ). Un faux-positif correspond au cas d'un gène où la différence observée dépasse par hasard un seuil fixé à l'avance. « Par hasard » signifie qu'en général on ne retrouverait pas une différence aussi grande si l'expérience était répétée.

Comme une expérience de transcriptome porte sur des milliers de gènes simultanément, l'analyse statistique est utilisée pour évaluer le nombre probable de faux-positifs au-delà d'un seuil donné : 40 gènes ont une p-value inférieure ou égale à 1 % par hasard si l'expérience porte sur 4 000 gènes alors que c'est le cas de 400 gènes si l'expérience porte sur 40 000 gènes.

L'estimation du nombre de faux positifs n'est qu'une première étape dans le raisonnement lorsqu'on fixe le seuil qui va définir l'ensemble des gènes à étudier. En effet, on trouve au-delà du seuil à la fois des faux positifs et des gènes pour lesquels la différence observée est bien réelle (c'est-à-dire qu'on retrouverait ces gènes dans une autre expérience).

L'information clé est la proportion de faux positifs dans l'ensemble des gènes sélectionnés car elle mesure le risque de se lancer dans une fausse piste si on décide de travailler sur un gène pris dans cet ensemble. C'est le False Discovery Rate (FDR). Habituellement le seuil est fixé de sorte qu'il n'y ait pas plus de 5 % de faux-positifs dans le lot de gènes sélectionnés (FDR = 5 %).

Par exemple, prenons une expérience portant sur 4 000 gènes dont 80 gènes ont une p-value inférieure ou égale à 0,1 % (p=0,001) qui sont les gènes retenus comme potentiellement intéressants. Sur 4000 gènes au départ, il y a environ 4 faux positifs (4000 × 0,001). Sur les 80 gènes retenus, il y en a donc environ que 4 qui par hasard présentent une p-value inférieure ou égale à 0,001. Plus précisément, le pourcentage de faux positifs (FDR) est de 4 / 80, soit 5 % des gènes sélectionnés.

La littérature fait parfois référence à la correction de Bonferroni. Cette correction n'est pas pertinente pour l'analyse du transcriptome car elle est exagérément restrictive.

Le critère numérique utilisé dans un test statistique est toujours le rapport des écarts observés pour le facteur intéressant (le signal) sur ceux qui sont dus à l'ensemble des causes qu'on néglige (le bruit). Les tests statistiques se distinguent par la façon de définir le bruit et par la loi utilisée pour estimer la probabilité des faux-positifs.

Plusieurs grandeurs sont utilisées simultanément pour décider si l'expression d'un gène varie de façon significative pour le facteur étudié :

• V1, la variance de l'ensemble des observations faites sur le gène,

• V2, la variance des observations pour le facteur étudié (ou une grandeur apparentée comme l'écart entre les deux moyennes dans le cas où le facteur n'a que deux états),

• V3, la variance des observations pour les facteurs dont on souhaite soustraire l'influence.

Le bruit est égal à V1 - (V2 + V3) et le signal à V2. La possibilité de calculer le terme V3 est une spécificité de l'analyse de variance (ANOVA), elle permet de contrôler plus finement la composition du bruit. Dans l'exemple du plan d'expérience de la Figure1, V3 correspond aux changements de niveau d'expression induits par le jour, la lignée et les problèmes techniques. Et le bruit recouvre tout ce qui fait que le niveau d'expression réel diffère de la simple addition des effets du facteur étudié, du jour, de la lignée et des problèmes techniques.

La difficulté majeure est de cerner le bruit avec précision tout en l'évaluant sur suffisamment de données. L'approche la plus sûre est de répéter l'expérience un grand nombre de fois. Ce n'est pas toujours possible et le nombre d'observations est souvent inférieur à 20. Les statisticiens cherchent alors à améliorer l'estimation du bruit en travaillant sur des groupes de gènes qui présentent à peu près le même niveau de bruit. De nombreuses solutions sont possibles, aucune n'est parfaite. Généralement, les regroupements sont faits a posteriori, après une première estimation du bruit pour tous les gènes séparément. On parle d'approche bayesienne .

Les méthodes se distinguent aussi par la distribution statistique du bruit. Elles prennent soit une distribution définie a priori (le plus souvent la gaussienne) soit une distribution estimée par permutation à partir de l'échantillon (les couples valeurs observées × conditions expérimentales sont constitués au hasard).

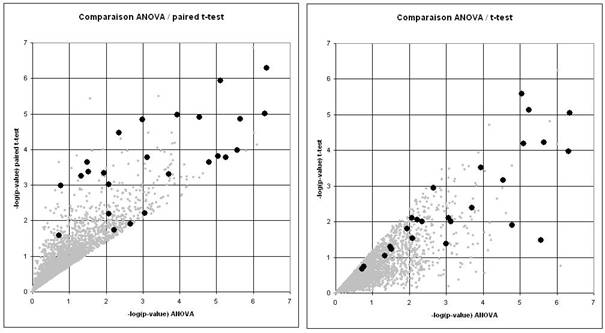

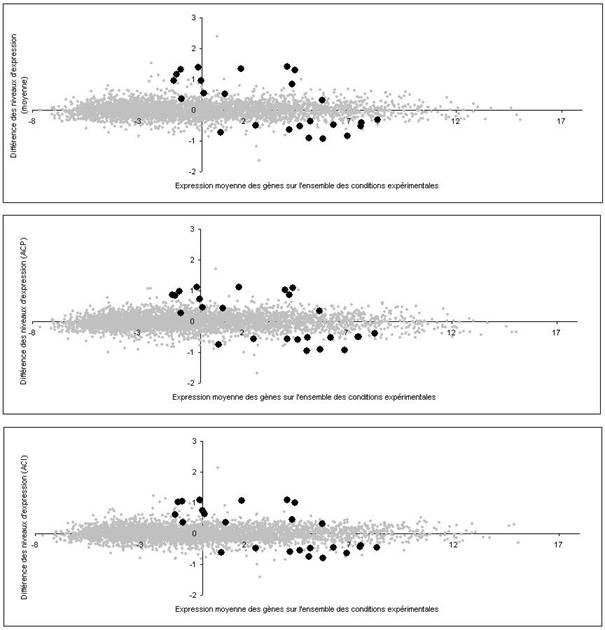

Les différentes méthodes ne mesurent pas exactement la même chose puisqu'elles n'évaluent pas le bruit de la même façon. Il est naturel qu'elles ne donnent pas exactement les mêmes résultats (Figure 4).

Les meilleures méthodes ont des sensibilités équivalentes en moyenne, mais différentes au cas par cas. Elles apportent des informations partiellement complémentaires. Il est logique de comparer leur résultat. Par contre, il ne faut pas se limiter à la liste des gènes significatifs avec toutes les méthodes. En effet, cela reviendrait à choisir pour chaque gène la méthode la moins sensible. En d'autres termes, il faut travailler sur l'union des listes pas sur leur intersection.

Le choix du test statistique joue un rôle secondaire dans la finesse des analyses. Celle-ci dépend avant tout du plan d'expérience car c'est lui qui permet d'éliminer une grande partie du bruit (voir ci-dessus le chapitre Concevoir un plan d'expérience ).

Bibliographie sur les statistiques ![]()

Les résultats d'une expérience de transcriptome forment un tableau de K colonnes, les conditions expérimentales ou les patients, et L lignes, les gènes. Il y a habituellement plusieurs milliers de gènes et quelques dizaines de colonnes. La cellule lk contient la valeur observée pour le gène l dans la condition k (son niveau d'expression) En d'autres termes, l'expérience peut être représentée par un unique nuage de L points (un par gène) dans un espace ayant K dimensions (une par condition expérimentale). La cellule lk est la coordonnée du gène l dans la condition k et la ligne l correspond aux coordonnées du gène l dans l'espace de l'expérience.

Un seul coup d'oil donnerait une vision complète de l'expérience si nous pouvions nous représenter un objet dans un espace à K dimensions. Mais nous sommes limités à deux dimensions (trois avec des astuces graphiques) et il va falloir aborder le nuage par plans successifs. L'idée simple qui consiste à faire tous les graphiques en prenant les conditions expérimentales deux par deux n'est pas réaliste car le nombre de graphiques est généralement très grand (il y a K×(K-1) / 2 graphiques, c'est-à-dire 120 graphiques rien que pour les seize conditions expérimentales de la Figure 1). Il n'est pas possible de les analyser tous et, de toute façon, chacun d'eux ne contient qu'une toute petite partie de l'information. Il est nécessaire de regrouper astucieusement les conditions expérimentales pour aboutir à un petit nombre de graphiques réellement pertinents.

Nota bene , dans tout ce qui suit, on appelle « niveau d'expression » la valeur normalisée de l'intensité lue par le photomultiplicateur (la normalisation correspond à une transformation logarithmique suivie d'une transformation linéaire pour amener la moyenne à zéro et la variance à un pour chaque condition expérimentale).

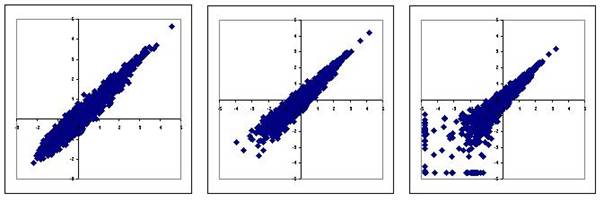

Une représentation classique consiste à mettre en abscisse le niveau moyen d'expression dans l'ensemble de l'expérience et en ordonnée la différence des niveaux d'expression entre deux états différents (Figure 5, image du haut). Dans l'idéal, si les observations étaient parfaitement reproductibles, l'ordonnée serait égale à zéro pour les gènes dont le niveau d'expression ne varie pas dans l'expérience : ils seraient alignés sur une droite D . En réalité, le nuage des gènes ne forme pas une droite mais un cigare très allongé car les observations sont bruitées. Le bruit correspond justement à l'épaisseur du nuage.

Les gènes dont le niveau d'expression a changé sont en dehors de la droite D . Leur distance à la droite est proportionnelle à la différence des niveaux d'expression. Ils sont faciles à repérer lorsqu'ils figurent à la périphérie du nuage, c'est-à-dire quand le rapport signal sur bruit est élevé (le signal étant la distance à la droite D et le bruit l'épaisseur moyenne du nuage au même endroit).

L'image du haut de la Figure 5 est simple à construire puisqu'elle donne le même poids à toutes les conditions expérimentales. Mais ce choix qui n'est pas pertinent lorsque certaines conditions expérimentales apportent plus d'information que d'autres (par exemple des mesures faites en partant de peu d'ARN sont moins précises que les autres). La meilleure solution est de remplacer la moyenne simple par une moyenne pondérée où le poids d'une condition expérimentale est proportionné à l'information qu'elle apporte. Le calcul de la pondération optimale est possible sous certaines hypothèses.

L'analyse en composantes principales (ACP) donne la solution lorsque le niveau d'expression a une distribution gaussienne (une distribution gaussienne est totalement définie par sa variance). Les figures produites par l'ACP contiennent toute l'information car elles sont calculées de telle sorte que la variance de chacune est maximale. Elles donnent une description complète de l'expérience.

Mais en réalité la distribution du niveau d'expression est souvent différente d'une gaussienne (distribution avec des écarts à la moyenne très importants, distribution asymétrique, distribution bi-modale, etc.). Dans ce cas l'ACP ne fournit pas la solution optimale. Il est préférable de la remplacer par l'analyse en composantes indépendantes (ACI). L'ACI montre le nuage sous des angles mettant en valeur les anomalies de distribution. Elle permet de déceler des phénomènes qui ont échappé avec l'ACP.

Utiliser plusieurs méthodes graphiques est équivalent à regarder le nuage sous des angles différents. Il est imaginable de voir sous un certain angle des gènes sortir du nuage alors qu'ils paraissent noyés dans la masse sous un autre angle (Figure 5). Le seul risque que l'on court avec une méthode inadéquate est rater des gènes.

En général, seule la périphérie du nuage est exploitable visuellement. L'organisation interne est cachée par la superposition de milliers de gènes sur une même image. Il est pourtant intéressant d'identifier les gènes qui sont proches les uns des autres dans le nuage car ce sont des gènes qui ont à peu près le même profil d'expression. Les biologistes posent le plus souvent une question apparentée : quels sont les gènes impliqués dans un même processus ? Y répondre revient à chercher dans le nuage des régions où la densité de gènes est anormalement forte (en d'autres termes, cela revient à chercher les amas de gènes dans le nuage).

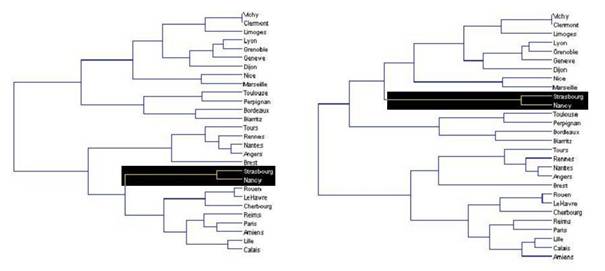

Il s'agit en réalité d'une question très générale qui se pose dans la plupart des disciplines scientifiques et qui a donné naissance à des myriades de méthodes de classification (on dit aussi clustering). Derrière leur apparente simplicité, les méthodes de clustering nécessitent toujours de fixer la valeur de plusieurs paramètres. C'est un exercice difficile car le jeu de valeurs qui donne de bons résultats dans certains cas peut s'avérer très médiocre sur d'autres données. Si toutes les méthodes donnent à peu près le même résultat quand le nuage est fragmenté avec des amas clairement séparés par des zones pratiquement vides, les résultats sont beaucoup moins fiables quand le nuage est homogène. Ils dépendent alors beaucoup de la méthode de classification utilisée et de la valeur des paramètres (Figure 6).

Faute de critères objectifs, il n'est pas rare alors que le biologiste retienne seulement les clusters qu'il sait interpréter. L'analyse du transcriptome ne lui sert alors qu'à confirmer ce qu'il savait déjà, ce qui n'est pas nécessairement le résultat le plus intéressant ! En d'autres termes, le clustering convient bien pour illustrer un résultat obtenu par une autre approche, mais ce n'est pas une bonne méthode pour découvrir quelques chose.

L'analyse serait facilitée s'il était possible de donner une image fidèle de la densité de gènes dans chaque région du nuage avec peu ( j) de points, puis de lister les gènes qui sont dans les régions où ces points sont proches les uns des autres. Une façon de faire est de redessiner le nuage en tirant au sort j gènes (par exemple un gène sur cent). C'est une solution naïve qui a peu de chance d'être satisfaisante. Une autre solution assez simple est le k-means . Elle vise à regrouper les points en k groupes aussi denses que possible (k est fixé par l'utilisateur). En fait, calculer la position optimale des j points pour donner une image fidèle des variations de densité au sein du nuage est un problème difficile. Les programmes ne proposent que des solutions approchées dont le détail dépend de plusieurs paramètres. Un exemple de cette approche est donné par SOM (Self Organizing Maps).

Une autre solution est d'agglomérer progressivement les points en commençant par ceux qui sont les plus proches dans le nuage. On parle alors de classification hiérarchique.

On peut dans le cas du transcriptome utilisé un artifice qui consiste à éliminer les gènes qui sont au cour du nuage avant de procéder à une classification. Cette élimination se fait après analyse statistique des données. Ainsi l'analyse ne porte que sur ceux dont le niveau d'expression a changé notablement au cours de l'expérience.

Dans tous les cas, il faut vérifier la stabilité de la classification obtenue. Pour cela on bruite les données initiales en les modifiant aléatoirement de 10 à 20 %, puis on relance la classification. Par recoupement, il est possible de repérer les gènes qui sont toujours classés ensembles.

![]() Bibliographie

sur les méthodes de clustering

Bibliographie

sur les méthodes de clustering

Le transcriptome est fréquemment utilisé pour identifier un état physiologique particulier (par exemple pour optimiser le traitement en fonction du sous-type de cancer). L'objectif est d'obtenir un diagnostic fiable basé sur la mesure du niveau d'expression de quelques gènes.

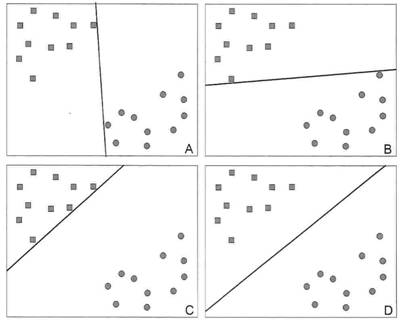

C'est un problème différent de celui traité ci-dessus. D'un point de vue géométrique, les points du nuage sont maintenant des individus. Les coordonnées d'un individu dans le nuage correspondent au niveau d'expression de ses gènes. On cherche à représenter le nuage sous un angle qui sépare le plus possible les différentes catégories d'individus (maladie 1 vs maladie 2 par exemple). En pratique, on souhaite obtenir une bonne séparation en utilisant le moins possible de gènes (ceux qui sont les plus discriminants). Il est ensuite possible de placer un nouvel individu sur la figure pour voir à quelle catégorie il se rattache.

De nombreuses méthodes traitent ce problème. Elles diffèrent notamment par la façon de tracer la frontière entre les différentes catégories d'individus (Figure 7). Dans la méthode SVM ( Support Vector Machines ou Séparatrices à Vaste Marge en français), la frontière est tracée de sorte à maximiser la largeur de la marge qui sépare les différentes catégories d'individus (image D de la Figure 7). SVM présente aussi l'avantage d'identifier les individus qui sont à la marge et qui sont, en quelque sorte, les moins typiques de leur catégorie.

![]() Pour

en savoir plus sur la méthode SVM.

Pour

en savoir plus sur la méthode SVM.

![]() Bibliographie

sur le choix des gènes

Bibliographie

sur le choix des gènes

Jusqu'ici nous avons implicitement considéré que la distance entre les gènes était le critère pertinent pour examiner leurs relations au sein du nuage. Il y a pourtant d'autres choix possibles. Par exemple si les points du nuage avaient une masse, le critère pertinent serait le carré de la distance et non pas la distance linéaire car l'attraction entre eux serait inversement proportionnelle au carré de leur distance. Dans un autre ordre d'idée, on peut imaginer que la probabilité pour que deux gènes appartiennent à la même famille fonctionnelle décroît très vite lorsque la distance entre les gènes augmente, la décroissance pouvant suivre une gaussienne, une exponentielle ou toute autre loi de probabilité.

On parle de méthode à noyau dans tous les cas où la distance linéaire est remplacée par un critère non linéaire. Cette technique augmente la puissance des méthodes d'analyse. Elle est appliquée couramment à des méthodes comme SOM et SVM. Elle peut aussi être utilisée pour l'ACP et l'ACI, qui deviennent alors des ACP et des ACI à noyau.

![]() Pour

en savoir plus sur les méthodes à noyau

Pour

en savoir plus sur les méthodes à noyau

Le plus souvent, les expériences de transcriptome sont sous-exploitées car l'expérimentateur ne s'intéresse qu'à quelques gènes ou à un facteur donné alors que les observations portent sur tous les gènes et l'ensemble des facteurs. Une exploration du nuage dans toutes les directions ouvre la voie à une exploitation beaucoup plus complète des observations. L'analyse en composantes principales et l'analyse en composantes indépendantes facilitent ce type d'approche.

Il est fréquent d'isoler ainsi des groupes de gènes dont le profil d'expression est propre à quelques patients ou quelques conditions expérimentales. La cause des changements de niveau d'expression est souvent inconnue car elle ne coïncide par avec un facteur clairement identifié dans l'expérience. Malgré tout l'observation est importante. Elle peut déboucher sur l'identification de sous-types dans une maladie ou contribuer à la découverte de réseaux de gènes.

Il est rarement possible de monter une expérience de transcriptome d'une taille suffisante pour identifier avec certitude des sous-types dans une maladie ou un réseau de gène. Cependant, on peut répondre à ces questions même si l'on ne connaît pas en détail les conditions expérimentale. C'est pourquoi la faiblesse de l'échantillon peut être compensée en exploitant les données accessibles sur le Web. Un pré-traitement statistique corrige l'hétérogénéité des données.

Vous pouvez télécharger la bibliographie complête (fichier pdf ou fichier html) en cliquant ici ou la consulter ci-dessous (lien sur tous les articles au format pdf):

Sommaire de la bibliographie

An improved physico-chemical model of hybridization

on high-density oligonucleotide microarrays

Comparison of Affymetrix GeneChip expression measures

Evaluation of methods for oligonucleotide array data

via quantitative real-time PCR..

Statistical analysis of high-density oligonucleotide

arrays: a multiplicative noise model

The high-level similarity of some disparate gene expression

measures

Adaptative quality-based clustering of gene expression

profiles

Analysing microarray data using modular regulation analysis

Cluster analysis and display of genome-wide expression

patterns

Comparisons and validation of statistical clustering

techniques for microarray gene expression data

Determination of minimum sample size and discriminatory

expression patterns in microarray data

Evolutionary algorithms for finding optimal gene sets

in microarray prediction

From co-expression to co-regulation: how many microarray

experiments do we need?

Instance-based concept learning from multiclass DNA

microarray data

Trustworthiness and metrics in visualizing similarity

of gene expression

Using repeated measurements to validate hierarchical

gene clusters

Weighted rank aggregation of cluster validation measures:

a Monte Carlo cross-entropy approach

A review of feature selection techniques in bioinformatics

Bioinformatics need to adopt statistical thinking

Comments on selected fundamental aspects of microarray

analysis

Evaluation and comparison of gene clustering methods

in microarray analysis

Evaluation of microarray data normalization procedures

using spike-in experiments

Metabolomics in systems biology

Microarray data analysis: from disarray to consolidation

and consensus

Overview of Tools for Microarray Data Analysis and Comparison

Analysis

Cross-platform

reproducibility

A methodology for global validation of microarray experiments

A study of inter-lab and inter-platform agreement of

DNA microarray data

Analysis of variance components in gene expression data

Application of a correlation correction factor in a

microarray cross-platform reproducibility study

Reproducibility of microarray data: a further analysis

of microarray quality control (MAQC) data

Three microarray platforms: an analysis of their concordance

in profiling gene expression

A framework for significance analysis of gene expression

data using dimension reduction methods

AnovArray: a set of SAS macros for the analysis of variance

of gene expression data

Blind Source Separation and the Analysis of Microarray

Data

Correspondence analysis applied to microarray data

GeneANOVA gene expression analysis of variance

Gene expression variation between mouse inbred strains

Independent Component Analysis: A Tutorial

Linear modes of gene expression determined by independent

component analysis.

Metabolite fingerprinting: detecting biological features

by independent component analysis

Novel approaches to gene expression analysis of active

polyarticular juvenile rheumatoid arthritis

Significance analysis of microarrays applied to the

ionizing radiation response

Statistical Design and the Analysis of Gene Expression

Microarray Data

Variation in tissue-specific gene expression among natural

populations

A comparative review of estimates of the proportion

unchanged genes and the false discovery rate

A note on the false discovery rate and inconsistent

comparisons between experiments.

A simple method for assessing sample sizes in microarray

experiments

Effects of dependence in high-dimensional multiple testing

problems

Empirical Bayes screening of many p-values with applications

to microarray studies

Estimating p-values in small microarray experiments

Classification of microarray data using gene networks

Enrichment or depletion of a GO category within a class

of genes: which test?

Ontological analysis of gene expression data: current

tools, limitations, and open problems

An empirical Bayes approach to inferring large-scale

gene association networks

Analyzing gene expression data in terms of gene sets:

methodological issues

Comparative evaluation of gene-set analysis methods

Pathway level analysis of gene expression using singular

value decomposition

Bayesian meta-analysis models for microarray data: a

comparative study

Can subtle changes in gene expression be consistently

detected with different microarray platforms?

Coexpression Analysis of Human Genes Across Many Microarray

Data Sets

Combining Affymetrix microarray results

Merging two gene-expression studies via cross-platform

normalization

Variation in tissue-specific gene expression among natural

populations

Ranking analysis of F-statistics for microarray data

An adaptive method for cDNA microarray normalization

Can Zipf's law be adapted to normalize microarrays?

Making sense of microarray data distributions

Normalization of single-channel DNA array data by principal

component analysis

Reuse of imputed data in microarray analysis increases

imputation efficiency

Using Generalized Procrustes Analysis (GPA) for normalization

of cDNA microarray data

Biases induced by pooling samples in microarray experiments

Effect of pooling samples on the efficiency of comparative

studies using microarrays

Pooling mRNA in microarray experiments and its effect

on power

Statistical implications of pooling RNA samples for

microarray experiments

Quality

control of microarrays

A Bayesian missing value estimation method for gene

expression profile data

A comparison of background correction methods for two-colour

microarrays

Combining signals from spotted cDNA microarrays obtained

at different scanning intensities

Comparing transformation methods for DNA microarray

data

Correcting for gene-specific dye bias in DNA microarrays

using the method of maximum likelihood

Gaussian mixture clustering and imputation of microarray

data

Microarray image analysis: background estimation using

quantile and morphological filters

Missing-value estimation using linear and non-linear

regression with Bayesian gene selection

Quality determination and the repair of poor quality

spots in array experiments

Scanning microarrays at multiple intensities enhances

discovery of differentially expressed genes

Statistical estimation of gene expression using multiple

laser scans of microarrays

Model based analysis of real-time PCR data from DNA

binding dye protocols

Statistical analysis of real-time PCR data

Statistical significance of quantitative PCR

Analyzing time series gene expression data

Difference-based clustering of short time-course microarray

data with replicates.

Fundamental patterns underlying gene expression profiles:

Simplicity from complexity

Identification of gene expression patterns using planned

linear contrasts

Permutation test for periodicity in short time series

data

A calibration method for estimating absolute expression

levels from microarray data

An analysis of the use of genomic DNA as a universal

reference in two channel DNA microarrays

An experimental evaluation of a loop versus a reference

design for two-channel microarrays

Analysis of Variance for Gene Expression Microarray

Data

Background correction for cDNA microarray images using

the TV+L1 model

Characterizing dye bias in microarray experiments

Effect of various normalization methods on Applied Biosystems

expression array system data

Evaluation of the gene-specific dye bias in cDNA microarray

experiments

Comment on Evaluation of the gene-specific dye bias

in cDNA microarray experiments

Extended analysis of benchmark datasets for Agilent

two-color microarrays

Missing channels in two-colour microarray experiments:

Combining single-channel and two-channel data

Reducing the variability in cDNA microarray image processing

by Bayesian inference

Pre-processing Agilent microarray data

Naoaki

Ono, Shingo Suzuki, Chikara Furusawa, Tomoharu Agata, Akiko Kashiwagi,

Hiroshi Shimizu and Tetsuya Yomo

Motivation: High-density DNA microarrays provide

useful tools to analyze gene expression comprehensively. However, it is

still difficult to obtain accurate expression levels from the observed

microarray data because the signal intensity is affected by complicated

factors involving probetarget hybridization, such as non-linear behavior

of hybridization, non-specific hybridization, and folding of probe and

target oligonucleotides. Various methods for microarray data analysis have

been proposed to address this problem. In our previous report, we presented

a benchmark analysis of probe-target hybridization using artificially synthesized

oligonucleotides as targets, in which the effect of non-specific hybridization

was negligible. The results showed that the preceding models explained

the behavior of probe-target hybridization only within a narrow range of

target concentrations. More accurate models are required for quantitative

expression analysis.

Results: The experiments showed that finiteness

of both probe and target molecules should be considered to explain the

hybridization behavior. In this article, we present an extension of the

Langmuir model that reproduces the experimental results consistently. In

this model, we introduced the effects of secondary structure formation,

and dissociation of the probe-target duplex during washing after hybridization.

The results will provide useful methods for the understanding and analysis

of microarray experiments.

Rafael

A. Irizarry, Zhijin Wu and Harris A. Jaffee

Motivation: In the Affymetrix GeneChip system,

preprocessing occurs before one obtains expression level measurements.

Because the number of competing preprocessing methods was large and growing

we developed a benchmark to help users identify the best method for their

application. A webtool was made available for developers to benchmark their

procedures. At the time of writing over 50 methods had been submitted.

Results: We benchmarked 31 probe set algorithms

using a U95A dataset of spike in controls. Using this dataset, we found

that background correction, one of the main steps in preprocessing, has

the largest effect on performance. In particular, background correction

appears to improve accuracy but, in general, worsen precision. The benchmark

results put this balance in perspective. Furthermore, we have improved

some of the original benchmark metrics to provide more detailed information

regarding precision and accuracy. A handful of methods stand out as providing

the best balance using spike-in data with the older U95A array, although

different experiments on more current arrays may benchmark differently.

Li-Xuan

Qin, Richard P Beyer, Francesca N Hudson, Nancy J Linford, Daryl E Morris

and Kathleen F Kerr

Background: There are currently many different

methods for processing and summarizing probe level data from Affymetrix

oligonucleotide arrays. It is of great interest to validate these methods

and identify those that are most effective. There is no single best way

to do this validation, and a variety of approaches is needed. Moreover,

gene expression data are collected to answer a variety of scientific questions,

and the same method may not be best for all questions. Only a handful of

validation studies have been done so far, most of which rely on spike-in

datasets and focus on the question of detecting differential expression.

Here we seek methods that excel at estimating relative expression. We evaluate

methods by identifying those that give the strongest linear association

between expression measurements by array and the "gold-standard" assay.

Quantitative reverse-transcription polymerase chain reaction (qRT-PCR)

is generally considered the "gold-standard" assay for measuring

gene expression by biologists and is often used to confirm findings from

microarray data. Here we use qRT-PCR measurements to validate methods for

the components of processing oligo array data: background adjustment, normalization,

mismatch adjustment, and probeset summary. An advantage of our approach

over spike-in studies is that methods are validated on a real dataset that

was collected to address a scientific question.

Results: We initially identify three of six

popular methods that consistently produced the best agreement between oligo

array and RT-PCR data for medium- and high-intensity genes. The three methods

are generally known as MAS5, gcRMA, and the dChip mismatch mode. For medium-

and high-intensity genes, we identified use of data from mismatch probes

(as in MAS5 and dChip mismatch) and a sequence-based method of background

adjustment (as in gcRMA) as the most important factors in methods' performances.

However, we found poor reliability for methods using mismatch probes for

low-intensity genes, which is in agreement with previous studies. Conclusion:

We advocate use of sequence-based background adjustment in lieu of mismatch

adjustment to achieve the best results across the intensity spectrum. No

method of normalization or probeset summary showed any consistent advantages.

Peter

B Dallas, Nicholas G Gottardo, Martin J Firth, Alex H Beesley, Katrin Hoffmann,

Philippa A Terry, Joseph R Freitas, Joanne M Boag, Aaron J Cummings and

Ursula R Kees

Background: The use of microarray technology

to assess gene expression levels is now widespread in biology. The validation

of microarray results using independent mRNA quantitation techniques remains

a desirable element of any microarray experiment. To facilitate the comparison

of microarray expression data between laboratories it is essential that

validation methodologies be critically examined. We have assessed the correlation

between expression scores obtained for 48 human genes using oligonucleotide

microarrays and the expression levels for the same genes measured by quantitative

real-time RT-PCR (qRT-PCR).

Results: Correlations with qRT-PCR data were

obtained using microarray data that were processed using robust multi-array

analysis (RMA) and the MAS 5.0 algorithm. Our results indicate that when

identical transcripts are targeted by the two methods, correlations between

qRT-PCR and microarray data are generally strong (r = 0.89). However, we

observed poor correlations between qRT-PCR and RMA or MAS 5.0 normalized

microarray data for 13% or 16% of genes, respectively.

Conclusion: These results highlight the complementarity

of oligonucleotide microarray and qRTPCR technologies for validation of

gene expression measurements, while emphasizing the continuing requirement

for caution in interpreting gene expression data.

R. Sasik,

E. Calvo and J. Corbeil

High-density

oligonucleotide arrays (GeneChip, Affymetrix, Santa Clara, CA) have become

a standard research tool in many areas of biomedical research. They quantitatively

monitor the expression of thousands of genes simultaneously by measuring

fluorescence from gene-specific targets or probes. The relationship between

signal intensities and transcript abundance as well as normalization issues

have been the focus of much recent attention (Hill et al., 2001; Chudin

et al., 2002; Naef et al., 2002a). It is desirable that a researcher has

the best possible analytical tools to make the most of the information

that this powerful technology has to offer. At present there are three

analytical methods available: the newly released Affymetrix Microarray

Suite 5.0 (AMS) software that accompanies the GeneChip product, the method

of Li and Wong (LW; Li and Wong, 2001), and the method of Naef et al. (FN;

Naef et al., 2001). The AMS method is tailored for analysis of a single

microarray, and can therefore be used with any experimental design. The

LW method on the other hand depends on a large number of microarrays in

an experiment and cannot be used for an isolated microarray, and the FN

method is particular to paired microarrays, such as resulting from an experiment

in which each treatment sample has a corresponding control sample.

Our focus is on analysis of experiments in which there is a series of samples.

In this case only the AMS, LW, and the method described in this paper can

be used. The present method is model-based, like the LWmethod, but assumes

multiplicative not additive noise, and employs elimination of statistically

significant outliers for improved results. Unlike LW and AMS, we do not

assume probe-specific background (measured by the so-called mismatch probes).

Rather, we assume uniform background, whose level is estimated using both

the mismatch and perfect match probe intensities.

Kathe

E. Bjork and Karen Kafadar

Motivation: Affymetrix GeneChips are common

30 profiling platforms for quantifying gene expression. Using publicly

available datasets of expression profiles from human and mouse experiments,

we sought to characterize features of GeneChip data to better compare and

evaluate analyses for differential expression, regulation and clustering.

We uncovered an unexpected order dependence in expression data that holds

across a variety of chips in both human and mouse data.

Results: Order dependence among GeneChips

affected relative expression measures pre-processed and normalized with

the Affymetrix MAS5.0 algorithm and the robust multi-array average summarization

method. The effect strongly influenced detection calls and tests for differential

expression and can potentially significantly bias experimental results

based on GeneChip profiling.

Nandini

Raghavan, An M.I.M. De Bondt, Willem Talloen, Dieder Moechars, Hinrich

W.H. Göhlmann and Dhammika Amaratunga

Probe-level

data from Affymetrix GeneChips can be summarized in many ways to produce

probe-set level gene expression measures (GEMs). Disturbingly, the different

approaches not only generate quite different measures but they could also

yield very different analysis results. Here, we explore the question of

how much the analysis results really do differ, first at the gene level,

then at the biological process level. We demonstrate that, even though

the gene level results may not necessarily match each other particularly

well, as long as there is reasonably strong differentiation between the

groups in the data, the various GEMs do in fact produce results that are

similar to one another at the biological process level. Not only that the

results are biologically relevant. As the extent of differentiation drops,

the degree of concurrence weakens, although the biological relevance of

findings at the biological process level may yet remain.

Alexander

Statnikov, Constantin F. Aliferis, Ioannis Tsamardinos, Douglas Hardin

and Shawn Levy

Motivation: Cancer diagnosis is one of the most

important emerging clinical applications of gene expression microarray

technology.We are seeking to develop a computer system for powerful and

reliable cancer diagnostic model creation based on microarray data. To

keep a realistic perspective on clinical applications we focus on multicategory

diagnosis. To equip the system with the optimum combination of classifier,

gene selection and cross-validation methods, we performed a systematic

and comprehensive evaluation of several major algorithms for multicategory

classification, several gene selection methods, multiple ensemble classifier

methods and two cross-validation designs using 11 datasets spanning 74

diagnostic categories and 41 cancer types and 12 normal tissue types.

Results: Multicategory support vector machines

(MC-SVMs) are the most effective classifiers in performing accurate cancer

diagnosis from gene expression data. The MC-SVM techniques by Crammer and

Singer, Weston and Watkins and one-versus-rest were found to be the best

methods in this domain. MC-SVMs outperform other popular machine learning

algorithms, such as k-nearest neighbors, backpropagation and probabilistic

neural networks, often to a remarkable degree. Gene selection techniques

can significantly improve the classification performance of both MC-SVMs

and other non-SVM learning algorithms. Ensemble classifiers do not generally

improve performance of the best non-ensemble models. These results guided

the construction of a software system GEMS (Gene Expression Model Selector)

that automates high-quality model construction and enforces sound optimization

and performance estimation procedures. This is the first such system to

be informed by a rigorous comparative analysis of the available algorithms

and datasets.

Maïa Chanrion, Hélène Fontaine, Carmen Rodriguez, Vincent Negre, Frédéric Bibeau, Charles Theillet, Alain Hénaut and Jean-Marie Darbon

Background: Current histo-pathological prognostic

factors are not very helpful in predicting the clinical outcome of breast

cancer due to the disease's heterogeneity. Molecular profiling using a

large panel of genes could help to classify breast tumours and to define

signatures which are predictive of their clinical behaviour.

Methods: To this aim, quantitative RT-PCR

amplification was used to study the RNA expression levels of 47 genes in

199 primary breast tumours and 6 normal breast tissues. Genes were selected

on the basis of their potential implication in hormonal sensitivity of

breast tumours. Normalized RT-PCR data were analysed in an unsupervised

manner by pairwise hierarchical clustering, and the statistical relevance

of the defined subclasses was assessed by Chi2 analysis. The robustness

of the selected subgroups was evaluated by classifying an external and

independent set of tumours using these Chi2-defined molecular signatures.

Results: Hierarchical clustering of gene

expression data allowed us to define a series of tumour subgroups that

were either reminiscent of previously reported classifications, or represented

putative new subtypes. The Chi2 analysis of these subgroups allowed us

to define specific molecular signatures for some of them whose reliability

was further demonstrated by using the validation data set. A new breast

cancer subclass, called subgroup 7, that we defined in that way, was particularly

interesting as it gathered tumours with specific bioclinical features including

a low rate of recurrence during a 5 year follow-up.

Conclusion: The analysis of the expression of

47 genes in 199 primary breast tumours allowed classifying them into a

series of molecular subgroups. The subgroup 7, which has been highlighted

by our study, was remarkable as it gathered tumours with specific bioclinical

features including a low rate of recurrence. Although this finding should

be confirmed by using a larger tumour cohort, it suggests that gene expression

profiling using a minimal set of genes may allow the discovery of new subclasses

of breast cancer that are characterized by specific molecular signatures

and exhibit specific bioclinical features.

Frank

De Smet, Janick Mathys, Kathleen MarchaI, Gert Thijs, Bart De Moor and

Yves Moreau

Motivation: Microarray experiments generate

a considerable amount of data, which analyzed properly help us gain a huge

amount of biologically relevant information about the global cellular behaviour.

Clustering (grouping genes with similar expression profiles) is one of

the first steps in data analysis of high-throughput expression measurements.

A number of clustering algorithms have proved useful to make sense of such

data. These classical algorithms, though useful, suffer from several drawbacks

(e.g. they require the predefinition of arbitrary parameters like the number

of clusters; they force every gene into a cluster despite a low correlation

with other cluster members). ln the following we describe a novel adaptive

quality-based clustering algorithm that tackles some of these drawbacks.

Results: We propose a heuristic iterative

two-step algo- rithm: First, we find in the high-dimensional representation

of the data a sphere where the 'density' of expression profiles is locally

maximal (based on a preliminary estimate of the radius of the cluster-quality-based

approach). ln a second step, we derive an optimal radius of the cluster

(adaptive approach) so that only the significantly co-expressed genes are

included in the cluster. This estimation is achieved by fitting a model

to the data using an EM-algorithm. By inferring the radius from the data

itself, the biologist is freed from finding an optimal value for this radius

by trial-and-error. The computational complexity .of this method is approximately

linear in the number of gene expression profiles in the data set. Finally,

our method is successfully validated using existing data sets.

R. Keira

Curtis and Martin D. Brand

Motivation: Microarray experiments measure complex

changes in the abundance of many mRNAs under different conditions. Current

analysis methods cannot distinguish between direct and indirect effects

on expression, or calculate the relative importance of mRNAs in effecting

responses.

Results: Application of modular regulation

analysis to microarray data reveals and quantifies which mRNA changes are

important for cellular responses. The mRNAs are clustered, and then we

calculate how perturbations alter each cluster and how strongly those clusters

affect an output response. The product of these values quantifies how an

input changes a response through each cluster.

Two published

datasets are analysed. Two mRNA clusters transmit most of the response

of yeast doubling time to galactose; one contains mainly galactose metabolic

genes, and the other a regulatory gene. Analysis of the response of yeast

relative fitness to 2-deoxy-d-glucose reveals that control is distributed

between several mRNA clusters, but experimental error limits statistical

significance.

R. L.

Somorjai, B. Dolenko and R. Baumgartner

Motivation: Two practical realities constrain

the analysis of microarray data, mass spectra from proteomics, and biomedical

infrared or magnetic resonance spectra. One is the curse of dimensionality:

the number of features characterizing these data is in the thousands or

tens of thousands. The other is the curse of dataset sparsity: the number

of samples is limited. The consequences of these two curses are far-reaching

when such data are used to classify the presence or absence of disease.

Results: Using very simple classifiers, we

show for several publicly available microarray and proteomics datasets

how these curses influence classification outcomes. In particular, even

if the sample per feature ratio is increased to the recommended 510 by

feature extraction/reduction methods, dataset sparsity can render any classification

result statistically suspect. In addition, several optimal feature sets

are typically identifiable for sparse datasets, all producing perfect classification

results, both for the training and independent validation sets. This non-uniqueness

leads to interpretational difficulties and casts doubt on the biological

relevance of any of these optimal feature sets. We suggest an approach

to assess the relative quality of apparently equally good classifiers.

Michael B.

Eisen, Paul T. Spellman, Patrick O. Brown, and David Botstein

A system

of cluster analysis for genome-wide expression data from DNA microarray

hybridization is described that uses standard statistical algorithms to

arrange genes according to similarity in pattern of gene expression. The

output is displayed graphically, conveying the clustering and the underlying

expression data simultaneously in a form intuitive for biologists. We have

found in the budding yeast Saccharomyces cerevisiae that clustering gene

expression data groups together efficiently genes of known similar function,

and we find a similar tendency in human data. Thus patterns seen in genome-wide

expression experiments can be interpreted as indications of the status

of cellular processes. Also, coexpression of genes of known function with

poorly characterized or novel genes may provide a simple means of gaining

leads to the functions of many genes for which information is not available

currently.

Susmita

Datta and Somnath Datta

Motivation: With the advent of microarray chip

technology, large data sets are emerging containing the simultaneous expression

levels of thousands of genes at various time points during a biological

process. Biologists are attempting to group genes based on the temporal

pattern of their expression levels. While the use of hierarchical clustering

(UPGMA) with correlation distance has been the most common in the microarray

studies, there are many more choices of clustering algorithms in pattern

recognition and statistics literature. At the moment there do not seem

to be any clear-cut guidelines regarding the choice of a clustering algorithm

to be used for grouping genes based on their expression profiles.

Results: In this paper, we consider six clustering

algorithms (of various flavors!) and evaluate their performances on a well-known

publicly available microarray data set on sporulation of budding yeast

and on two simulated data sets. Among other things, we formulate three

reasonable validation strategies that can be used with any clustering algorithm

when temporal observations or replications are present. We evaluate each

of these six clustering methods with these validation measures. While the best method

is dependent on the exact validation strategy and the number of clusters

to be used, overall Diana appears to be a solid performer. Interestingly,

the performance of correlation-based hierarchical clustering and model-based

clustering (another method that has been advocated by a number of researchers)

appear to be on opposite extremes, depending on what validation measure

one employs. Next it is shown that the group means produced by Diana are

the closest and those produced by UPGMA are the farthest from a model profile

based on a set of hand-picked genes.

Daehee

Hwang, William A. Schmitt, George Stephanopoulos and Gregory Stephanopoulos

Motivation: Transcriptional profiling using

microarrays can reveal important information about cellular and tissue

expression phenotypes, but these measurements are costly and time consuming.

Additionally, tissue sample availability poses further constraints on the

number of arrays that can be analyzed in connection with a particular disease

or state of interest. It is therefore important to provide a method for

the determination of the minimum number of microarrays required to separate,

with statistical reliability, distinct disease states or other physiological

differences.

Results: Power analysis was applied to estimate

the minimum sample size required for two-class and multi-class discrimination.

The power analysis algorithm calculates the appropriate sample size for

discrimination of phenotypic subtypes in a reduced dimensional space obtained

by Fisher discriminant analysis (FDA). This approach was tested by applying

the algorithm to existing data sets for estimation of the minimum sample

size required for drawing certain conclusions on multi-class distinction

with statistical reliability. It was confirmed that when the minimum number

of samples estimated from power analysis is used, group means in the FDA

discrimination space are statistically different.

J. M.

Deutsch

Motivation: Microarray data has been shown recently

to be efficacious in distinguishing closely related cell types that often

appear in different forms of cancer, but is not yet practical clinically.

However, the data might be used to construct a minimal set of marker genes

that could then be used clinically by making antibody assays to diagnose

a specific type of cancer. Here a replication algorithm is used for this

purpose. It evolves an ensemble of predictors, all using different combinations

of genes to generate a set of optimal predictors.

Results: We apply this method to the leukemia

data of the Whitehead/MIT group that attempts to differentially diagnose

two kinds of leukemia, and also to data of Khan et al. to distinguish four

different kinds of childhood cancers. In the latter case we were able to

reduce the number of genes needed from 96 to less than 15, while at the

same time being able to classify all of their test data perfectly. We also

apply this method to two other cases, Diffuse large B-cell lymphoma data

(Shipp et al., 2002), and data of Ramaswamy et al. on multiclass diagnosis

of 14 common tumor types.

Ka Yee

Yeung, Mario Medvedovic and Roger E Bumgarner

Background: Cluster analysis is often used to

infer regulatory modules or biological function by associating unknown

genes with other genes that have similar expression patterns and known

regulatory elements or functions. However, clustering results may not have

any biological relevance.

Results: We applied various clustering algorithms

to microarray datasets with different sizes, and we evaluated the clustering

results by determining the fraction of gene pairs from the same clusters

that share at least one known common transcription factor. We used both

yeast transcription factor databases (SCPD, YPD) and chromatin immunoprecipitation

(ChIP) data to evaluate our clustering results. We showed that the ability

to identify co-regulated genes from clustering results is strongly dependent

on the number of microarray experiments used in cluster analysis and the

accuracy of these associations plateaus at between 50 and 100 experiments

on yeast data. Moreover, the model-based clustering algorithm MCLUST consistently

outperforms more traditional methods in accurately assigning co-regulated

genes to the same clusters on standardized data.

Conclusions: Our results are consistent with

respect to independent evaluation criteria that strengthen our confidence

in our results. However, when one compares ChIP data to YPD, the false-negative

rate is approximately 80% using the recommended p-value of 0.001. In addition,

we showed that even with large numbers of experiments, the false-positive

rate may exceed the truepositive rate. In particular, even when all experiments

are included, the best results produce clusters with only a 28% true-positive

rate using known gene transcription factor interactions.

Daniel

Berrar, Ian Bradbury and Werner Dubitzky

Background: Various statistical and machine

learning methods have been successfully applied to the classification of

DNA microarray data. Simple instance-based classifiers such as nearest

neighbor (NN) approaches perform remarkably well in comparison to more

complex models, and are currently experiencing a renaissance in the analysis

of data sets from biology and biotechnology. While binary classification

of microarray data has been extensively investigated, studies involving

multiclass data are rare. The question remains open whether there exists

a significant difference in performance between NN approaches and more

complex multiclass methods. Comparative studies in this field commonly

assess different models based on their classification accuracy only; however,

this approach lacks the rigor needed to draw reliable conclusions and is

inadequate for testing the null hypothesis of equal performance. Comparing

novel classification models to existing approaches requires focusing on

the significance of differences in performance.

Results: We investigated the performance

of instance-based classifiers, including a NN classifier able to assign

a degree of class membership to each sample. This model alleviates a major

problem of conventional instance-based learners, namely the lack of confidence

values for predictions. The model translates the distances to the nearest

neighbors into 'confidence scores'; the higher the confidence score, the

closer is the considered instance to a predefined class. We applied the

models to three real gene expression data sets and compared them with state-ofthe-

art methods for classifying microarray data of multiple classes, assessing

performance using a statistical significance test that took into account

the data resampling strategy. Simple NN classifiers performed as well as,

or significantly better than, their more intricate competitors.

Conclusion: Given its highly intuitive underlying

principles simplicity, ease-of-use, and robustness the k-NN classifier

complemented by a suitable distance-weighting regime constitutes an excellent

alternative to more complex models for multiclass microarray data sets.

Instance-based classifiers using weighted distances are not limited to

microarray data sets, but are likely to perform competitively in classifications

of high-dimensional biological data sets such as those generated by high-throughput

mass spectrometry.

Samuel

Kaski, Janne Nikkilä, Merja Oja, Jarkko Venna, Petri Törönen and Eero Castrén

Background: Conventionally, the first step in

analyzing the large and high-dimensional data sets measured by microarrays

is visual exploration. Dendrograms of hierarchical clustering, selforganizing

maps (SOMs), and multidimensional scaling have been used to visualize similarity

relationships of data samples. We address two central properties of the